# Kafka Source

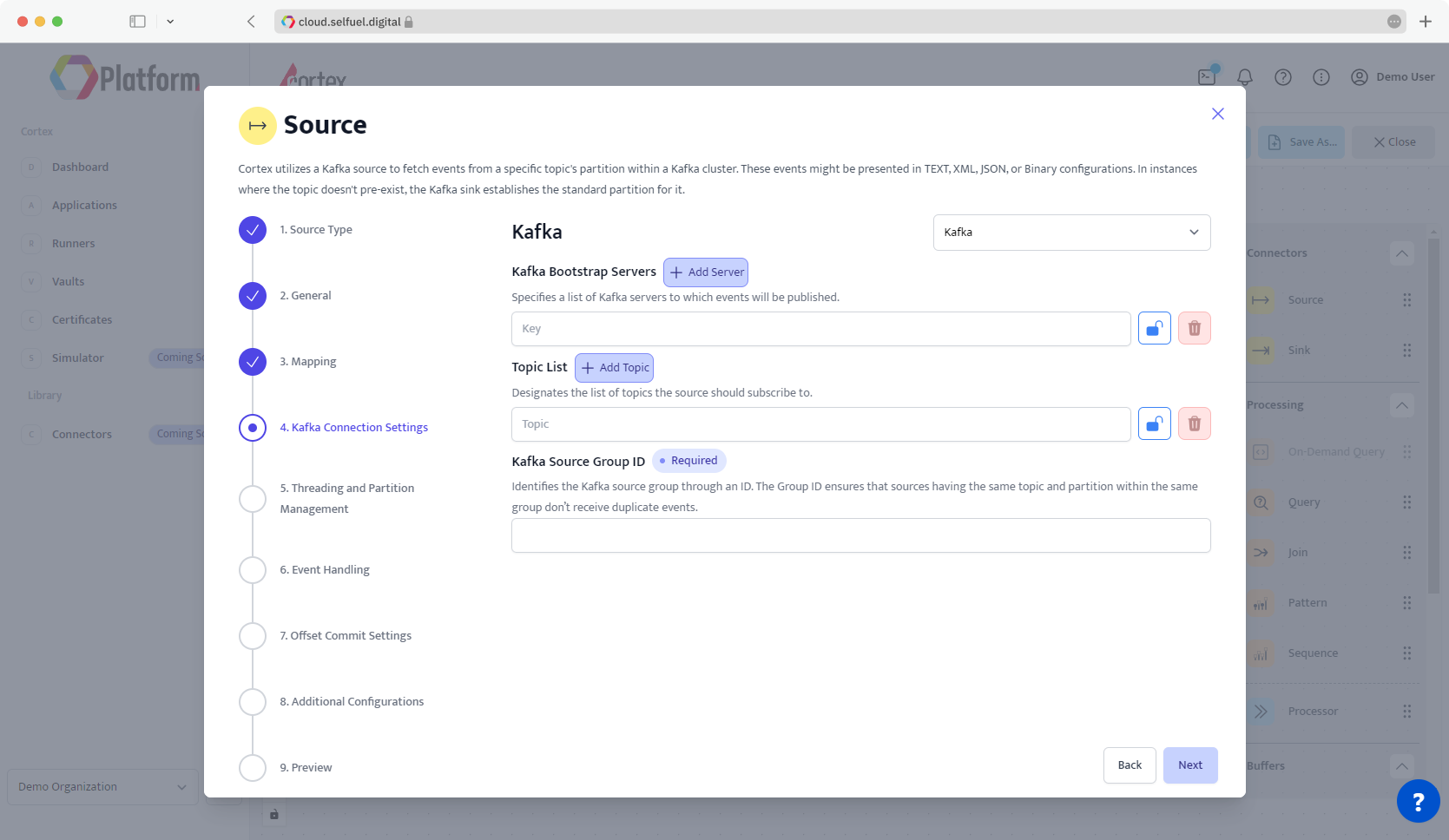

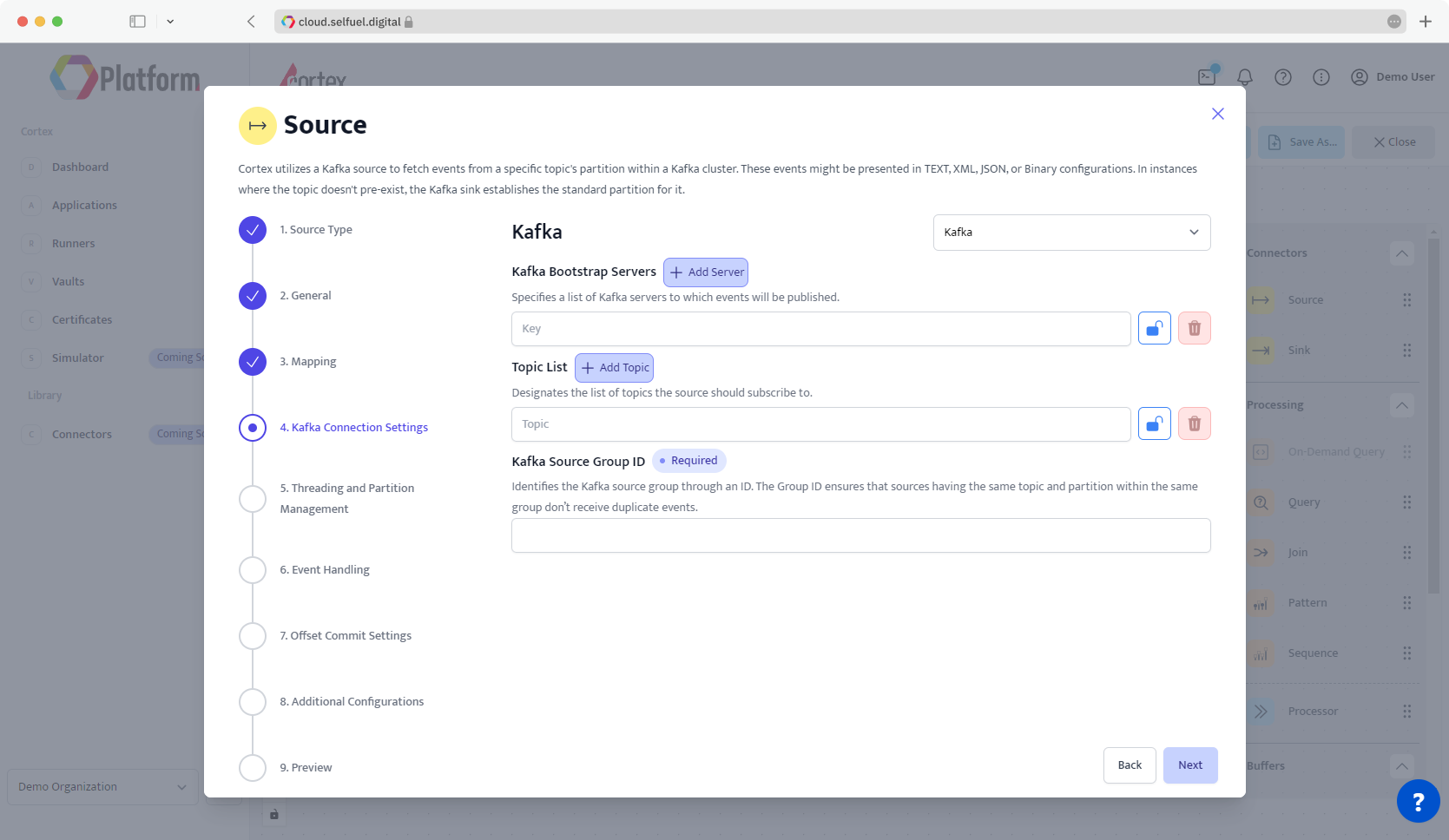

## Step 4 - Kafka Connection Settings

### Kafka Bootstrap Servers

Indicates the list of Kafka servers the Kafka source should connect to. This list should be provided as comma-separated values.

***e.g.*** `localhost:9092, localhost:9093`

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |
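As a rough illustration of the expected format, the sketch below splits a comma-separated server list into host and port pairs. The helper name `parse_bootstrap_servers` is hypothetical and not part of Cortex. Whether Cortex itself tolerates spaces or trailing commas is an assumption here.

```python
def parse_bootstrap_servers(value: str) -> list[tuple[str, int]]:
    """Split a comma-separated 'host:port' list into (host, port) pairs."""
    servers = []
    for entry in value.split(","):
        entry = entry.strip()
        if not entry:
            continue  # assumption: empty entries (e.g. a trailing comma) are ignored
        host, sep, port = entry.rpartition(":")
        if not sep or not host or not port.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        servers.append((host, int(port)))
    return servers


print(parse_bootstrap_servers("localhost:9092, localhost:9093"))
```

An entry without a port, such as `localhost`, raises a `ValueError` in this sketch.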

### Topic List

Indicates the list of topics the source should subscribe to. This list should be provided as comma-separated values.

***e.g.*** `topic_one, topic_two`

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |

### Kafka Source Group ID

Specifies the ID of the group the Kafka source belongs to. The Group ID ensures that sources in the same group that read the same topic and partition don’t receive duplicate events.

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |
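The effect of a shared Group ID can be pictured with a small simulation. In Kafka, each partition is consumed by exactly one member of a group, which is why members of the same group do not see the same event twice. The round-robin function below is a simplified sketch, not Kafka's actual assignment strategy, and the names `assign_partitions`, `source_a`, and `source_b` are hypothetical.

```python
def assign_partitions(partitions, members):
    """Give each partition to exactly one group member, round-robin."""
    assignment = {member: [] for member in members}
    for index, partition in enumerate(sorted(partitions)):
        owner = members[index % len(members)]
        assignment[owner].append(partition)
    return assignment


# Two sources sharing one Group ID split the partitions between them.
same_group = assign_partitions([0, 1, 2, 3], ["source_a", "source_b"])
print(same_group)  # {'source_a': [0, 2], 'source_b': [1, 3]}
```

No partition appears under both sources, so neither source receives events the other has already been given.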

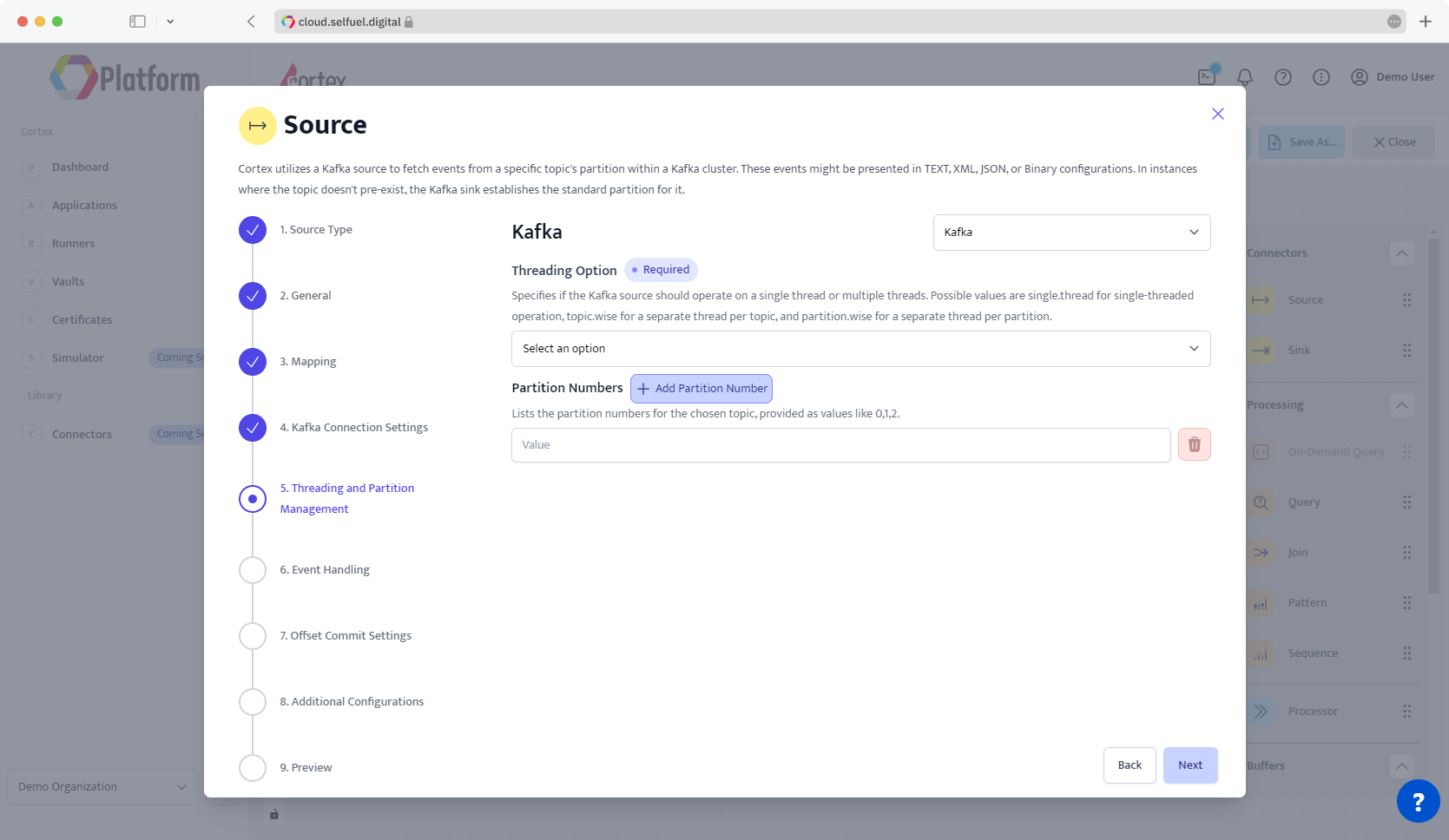

## Step 5 - Threading and Partition Management

### Threading Options

Specifies whether the Kafka source should operate on a single thread or on multiple threads. Possible values are:

* Single Thread: To run the Kafka source on a single thread.

* Topic-wise: To use a separate thread for each topic.

* Partition-wise: To use a separate thread for each partition.

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | |

### Partition Numbers

Indicates a list of the partition numbers for the chosen topic. This list should be provided as comma-separated values.

***e.g.*** `0, 2, 4`

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| 0 | STRING |
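To see how the threading option and the partition numbers might interact, the sketch below groups topic and partition pairs into one work list per thread. It is an illustration only: `plan_threads` and `parse_partitions` are hypothetical helpers, and the assumption that Partition-wise means one thread per topic and partition pair is not confirmed by this page.

```python
from itertools import product


def parse_partitions(value):
    """Turn a comma-separated string such as '0, 2, 4' into a list of ints."""
    return [int(part) for part in value.split(",") if part.strip()]


def plan_threads(mode, topics, partitions):
    """Return one list of (topic, partition) pairs per worker thread."""
    pairs = list(product(topics, partitions))
    if mode == "Single Thread":
        return [pairs]  # one thread handles everything
    if mode == "Topic-wise":
        return [[(topic, p) for p in partitions] for topic in topics]
    if mode == "Partition-wise":
        return [[pair] for pair in pairs]  # assumption: one thread per pair
    raise ValueError(f"unknown threading option: {mode!r}")


topics = ["topic_one", "topic_two"]
partitions = parse_partitions("0, 2, 4")
for mode in ("Single Thread", "Topic-wise", "Partition-wise"):
    print(mode, len(plan_threads(mode, topics, partitions)))
```

With two topics and three partitions, this sketch yields 1, 2, and 6 threads respectively.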

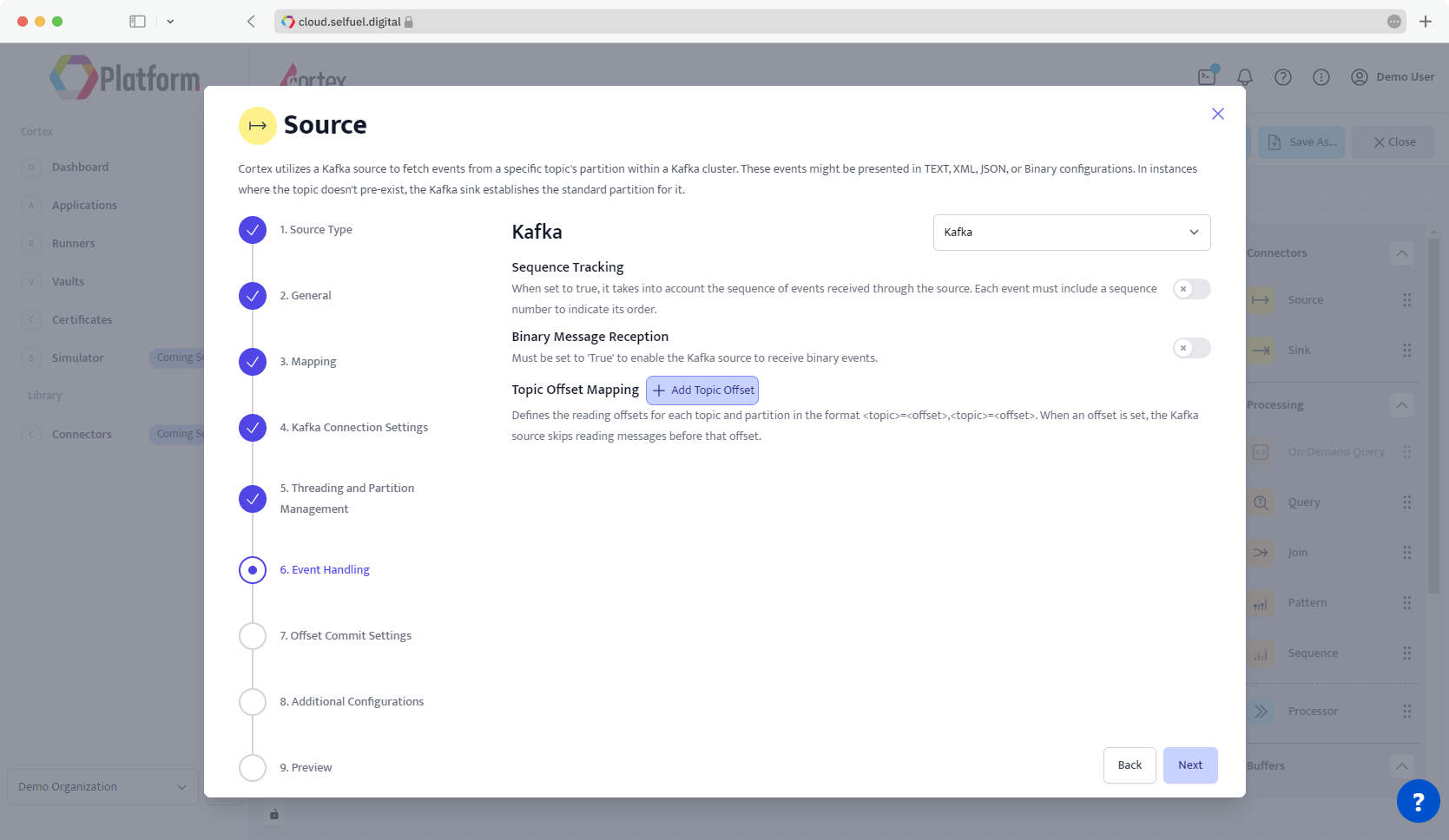

## Step 6 - Event Handling

### Sequence Tracking

When set to ON, it takes into account the sequence of events received through the source. Each event must include a sequence number to indicate its order.

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| OFF | STRING |

### Binary Message Reception

Must be set to ON to enable the Kafka source to receive binary events.

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| OFF | STRING |

### Topic Offset Mapping

Defines the reading offsets for each topic and partition as key-value pairs. When an offset is set, the Kafka source skips the messages before that offset.

{% hint style="info" %}

Unless an offset is defined for a specific topic, Cortex reads messages from the very beginning.

{% endhint %}

***e.g.*** `reading2=20, temperature=500` reads from the 21st message of the `reading2` topic, and from the 501st message of the `temperature` topic.

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |
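The offset arithmetic in the example can be checked with a short sketch. Kafka offsets are zero-based, so offset 20 points at the 21st message. The helpers `parse_offset_map` and `messages_to_read` are hypothetical, and the in-memory list only stands in for a topic.

```python
def parse_offset_map(value):
    """Parse 'topic=offset' pairs separated by commas into a dict."""
    offsets = {}
    for pair in value.split(","):
        pair = pair.strip()
        if not pair:
            continue
        topic, sep, offset = pair.partition("=")
        if not sep:
            raise ValueError(f"expected topic=offset, got {pair!r}")
        offsets[topic.strip()] = int(offset)
    return offsets


def messages_to_read(messages, start_offset=0):
    """Skip everything before start_offset; the default 0 reads from the beginning."""
    return messages[start_offset:]


offsets = parse_offset_map("reading2=20, temperature=500")
log = [f"msg-{i}" for i in range(25)]  # stand-in for the reading2 topic
remaining = messages_to_read(log, offsets.get("reading2", 0))
print(remaining[0], len(remaining))  # msg-20 5
```

A topic that is missing from the mapping falls back to offset 0 here, which mirrors the note above about reading from the very beginning.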

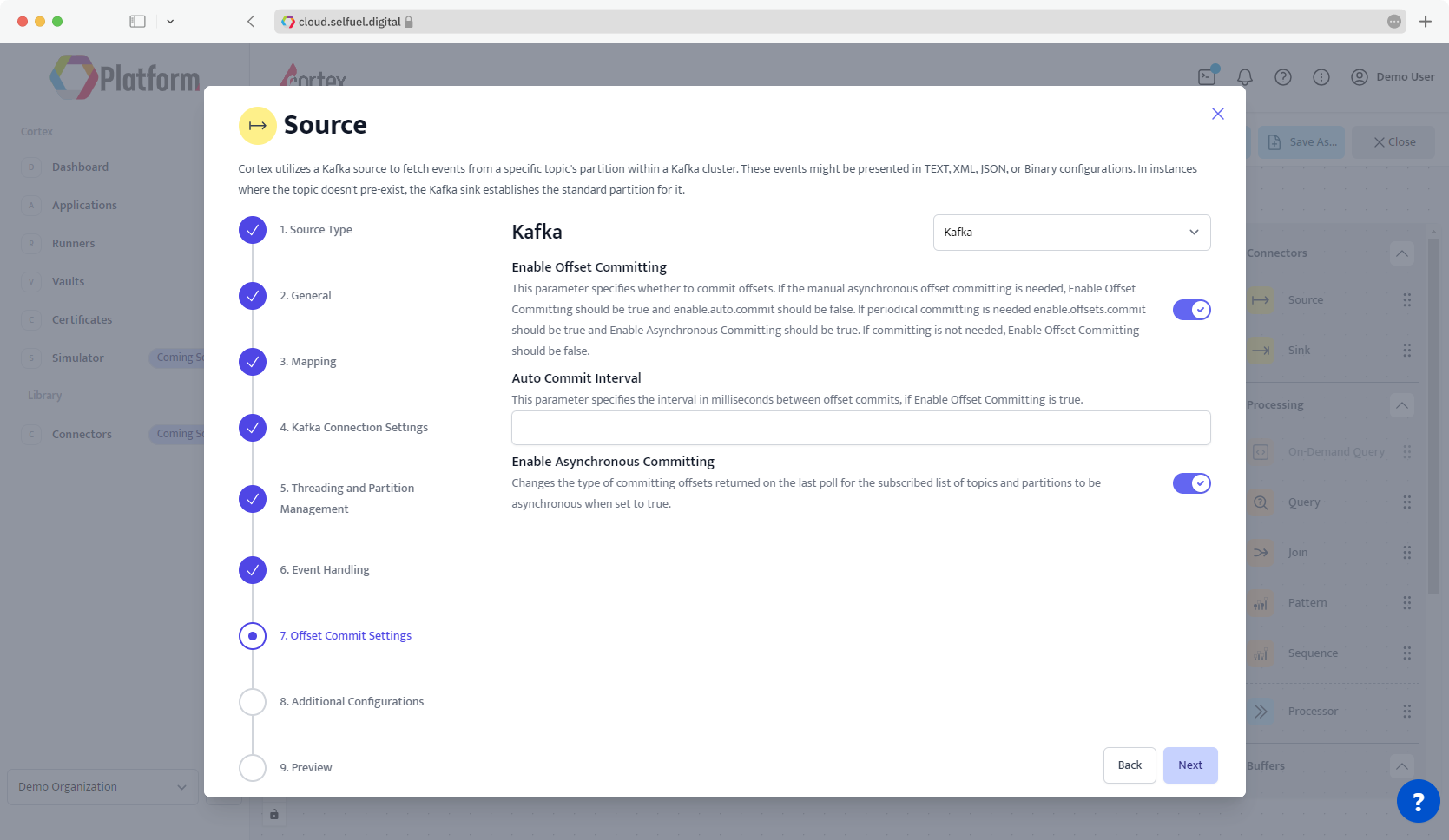

## Step 7 - Offset Commit Settings

### Enable Offset Committing

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |

### Auto Commit Interval

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |

### Enable Asynchronous Committing

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |

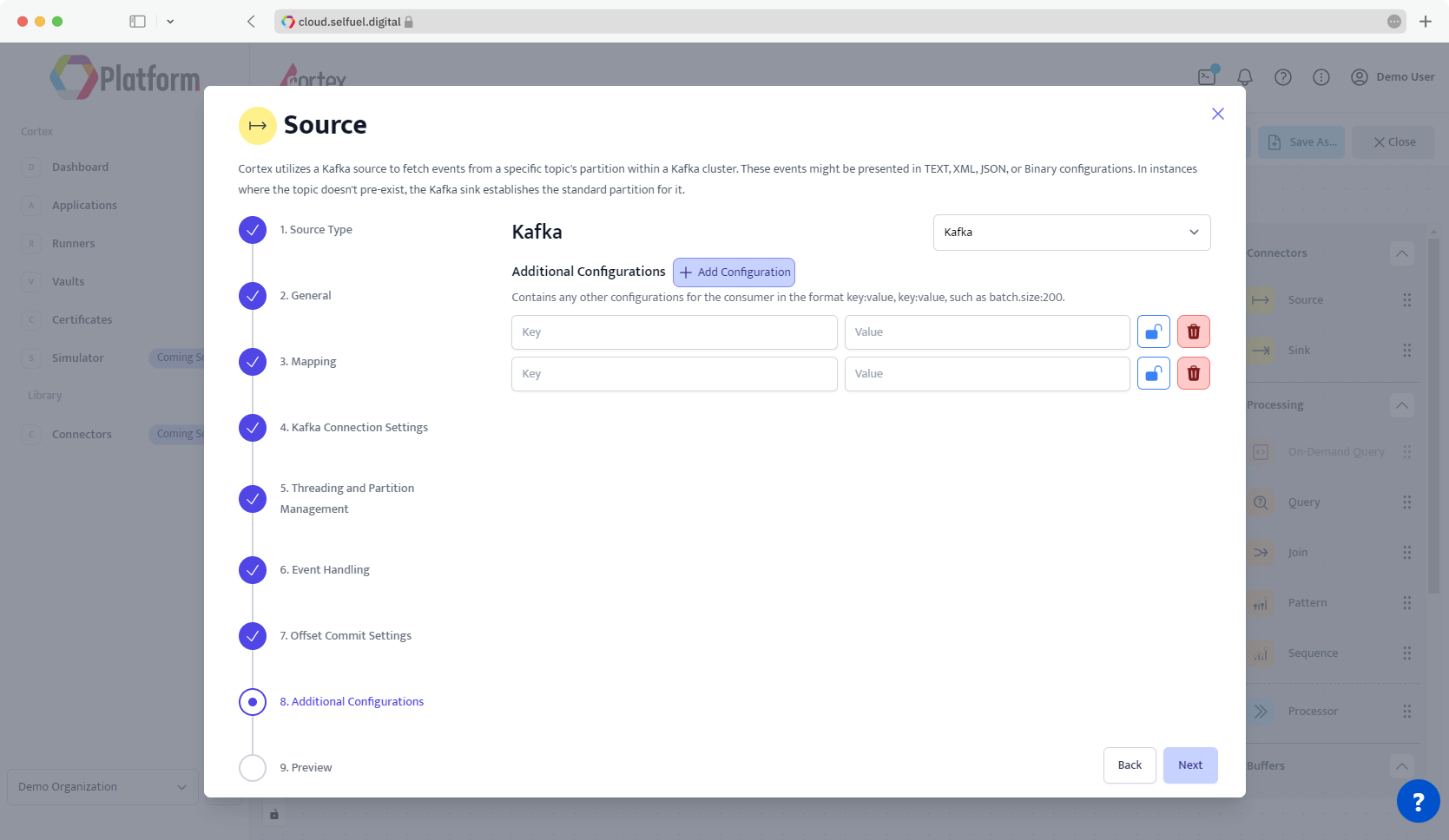

## Step 8 - Additional Settings

### Additional Configurations

Contains any other configurations for the consumer in key-value format. Examples of supported configurations:

***e.g.*** Keystore Type: '`ssl.keystore.type`' as key and '`JKS`' as value.

***e.g.*** Batch Size: '`batch.size`' as key and '`200`' as value.

| Default Value | Possible Data Type |
| ------------- | ------------------ |
| | STRING |
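One way to picture the key-value format is as extra entries merged into the consumer's property set. The sketch below is an assumption about how such fields could be combined, not a description of Cortex internals. `bootstrap.servers` and `group.id` are standard Kafka consumer property names, while `build_consumer_properties` and the group name `cortex_group` are hypothetical.

```python
def build_consumer_properties(bootstrap_servers, group_id, additional):
    """Merge connection settings and additional key-value pairs into one mapping."""
    props = {
        # Kafka expects the server list without spaces between entries.
        "bootstrap.servers": bootstrap_servers.replace(" ", ""),
        "group.id": group_id,
    }
    props.update(additional)  # additional configurations are applied last
    return props


props = build_consumer_properties(
    "localhost:9092, localhost:9093",
    "cortex_group",  # hypothetical Group ID
    {"ssl.keystore.type": "JKS", "batch.size": "200"},
)
for key, value in sorted(props.items()):
    print(f"{key}={value}")
```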

## Step 9 - Preview

The Preview step gives you a concise summary of all the changes you've made to the Kafka Source Node. Use it to review your configurations and confirm they are as intended before completing the node setup.

* **Viewing Configurations**: The Preview step presents a consolidated view of your node setup.

* **Saving and Exiting**: Use the Complete button to save your changes, exit the node, and return to the Canvas.

* **Revisions**: Use the Back button to return to any step and modify the node setup.

The Preview Step offers a user-friendly summary to manage and finalize node settings in Cortex.